Importance of Edge Computing

Edge computing is the practice of processing data close to where it is generated, such as on local devices, gateways, or regional servers, rather than sending everything to a central cloud. Its importance today comes from the need for faster responses, reduced bandwidth use, and improved reliability in environments where connectivity is limited or high latency is unacceptable. With the growth of IoT, AI, and real-time applications, edge computing has become a critical complement to cloud systems.

For social innovation and international development, edge computing matters because many communities operate in areas with low bandwidth or intermittent connectivity. Processing data locally allows mission-driven organizations to deploy AI-powered tools that remain useful even without constant internet access, supporting equity and inclusion in digital transformation.

Definition and Key Features

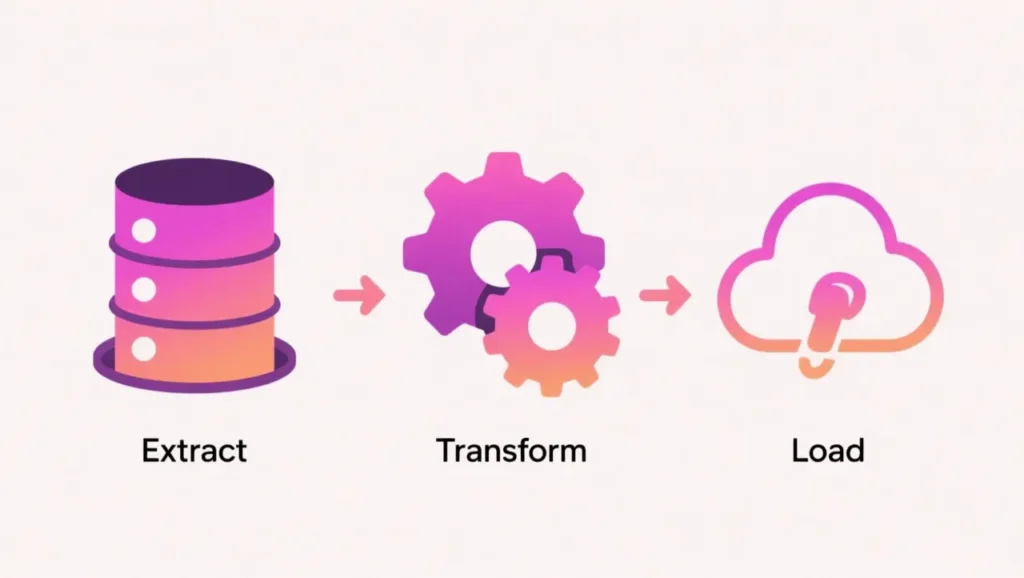

Edge computing shifts workloads from centralized data centers to devices or servers near the data source. Examples include running analytics on smartphones, processing video streams at local hubs, or deploying AI models on sensors and embedded systems. By reducing the distance data must travel, edge systems lower latency and improve resilience.
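As a rough illustration of running analytics near the data source, the sketch below condenses a window of raw sensor readings into a small summary on the device, so that only the summary needs to travel upstream. The function name `summarize_window` and the choice of summary fields are assumptions made for this example, not part of any particular platform.

```python
from statistics import fmean
from typing import Dict, Iterable


def summarize_window(readings: Iterable[float]) -> Dict[str, float]:
    """Reduce a window of raw readings to a compact summary (hypothetical helper)."""
    values = list(readings)
    if not values:
        raise ValueError("window is empty")
    # Four numbers are sent upstream instead of every raw reading.
    return {
        "count": float(len(values)),
        "min": min(values),
        "max": max(values),
        "mean": fmean(values),
    }


if __name__ == "__main__":
    # Example: three temperature readings collected locally.
    print(summarize_window([20.0, 22.0, 24.0]))
```

Whether a summary like this is sufficient depends on the application; some deployments would still upload raw data when bandwidth allows.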

Edge computing is not the same as cloud computing, which centralizes storage and processing in large data centers. Nor is it equivalent to a fully offline system, since edge deployments often integrate with cloud services to synchronize data or models when connectivity is available. Instead, it is a distributed architecture designed for responsiveness and adaptability.
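One common way to combine local operation with cloud synchronization is a store-and-forward buffer: the device keeps working and queues its data, then uploads the queue once a connection is available. The sketch below is a minimal version under stated assumptions. The class `StoreAndForwardBuffer` and the `upload` callback are hypothetical names; a real deployment would persist the queue to disk and handle partial uploads.

```python
from collections import deque
from typing import Callable, Deque, Dict, List

Reading = Dict[str, float]


class StoreAndForwardBuffer:
    """Hypothetical in-memory buffer that holds readings until an uplink is available."""

    def __init__(self, upload: Callable[[List[Reading]], bool], max_items: int = 1000) -> None:
        # `upload` is an assumed callback: it returns True when the batch was accepted.
        self._upload = upload
        # A bounded deque drops the oldest readings once max_items is reached.
        self._pending: Deque[Reading] = deque(maxlen=max_items)

    def record(self, reading: Reading) -> None:
        """Store a reading locally, regardless of connectivity."""
        self._pending.append(reading)

    def pending_count(self) -> int:
        return len(self._pending)

    def sync(self) -> int:
        """Try to upload everything pending; return the number of readings sent."""
        if not self._pending:
            return 0
        batch = list(self._pending)
        if self._upload(batch):
            self._pending.clear()
            return len(batch)
        # Upload failed (for example, no connectivity): keep the data for the next attempt.
        return 0
```

Calling `sync()` on a timer or whenever the device detects a network connection keeps the local application usable in the meantime.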

How This Works in Practice

In practice, edge computing requires balancing local processing power, energy consumption, and connectivity. Lightweight AI models are often deployed at the edge to enable real-time decision-making, with heavier processing handled by the cloud when possible. Examples include edge gateways that aggregate IoT data, or mobile apps that use on-device inference to provide instant predictions.
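The split between a lightweight local model and heavier cloud processing can be sketched as a simple routing rule: answer on the device when the local model is confident, and ask the cloud only when it is not and a connection exists. Everything named here (`classify`, `Decision`, the `edge_model` and `cloud_model` callables, and the 0.8 threshold) is an assumption for illustration, not a reference to a specific framework.

```python
from dataclasses import dataclass
from typing import Any, Callable, Optional, Tuple

# A model is assumed to be a callable returning (label, confidence in [0, 1]).
Model = Callable[[Any], Tuple[str, float]]


@dataclass
class Decision:
    label: str
    confidence: float
    source: str  # "edge" or "cloud"


def classify(
    sample: Any,
    edge_model: Model,
    cloud_model: Optional[Model] = None,
    min_confidence: float = 0.8,
) -> Decision:
    """Prefer the on-device result; escalate to the cloud only when needed and possible."""
    label, confidence = edge_model(sample)
    if confidence >= min_confidence or cloud_model is None:
        return Decision(label, confidence, "edge")
    try:
        cloud_label, cloud_confidence = cloud_model(sample)
    except ConnectionError:
        # No uplink: fall back to the local answer rather than failing.
        return Decision(label, confidence, "edge")
    return Decision(cloud_label, cloud_confidence, "cloud")
```

The threshold trades accuracy against latency, bandwidth, and energy: a higher value sends more samples to the cloud, a lower value keeps more decisions on the device.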

Challenges include limited hardware resources, security risks at distributed endpoints, and the complexity of managing updates across many devices. Advances in hardware acceleration are making it easier to run models at the edge, federated learning allows models to be trained across devices without centralizing raw data, and synchronization mechanisms help keep edge deployments consistent with central systems.
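To make the federated learning idea more concrete, the sketch below shows the aggregation step used in federated averaging: each device trains locally and sends only its model weights and sample count, and a coordinator combines them into a weighted average. This is a simplified illustration with plain lists of floats; the function name `federated_average` is an assumption, and production systems add secure aggregation, client selection, and repeated training rounds.

```python
from typing import List, Sequence, Tuple

# Each update is (model weights, number of local training samples).
ClientUpdate = Tuple[Sequence[float], int]


def federated_average(updates: Sequence[ClientUpdate]) -> List[float]:
    """Average client weights, weighting each client by its sample count."""
    total_samples = sum(n for _, n in updates)
    if total_samples <= 0:
        raise ValueError("no training samples reported")
    dim = len(updates[0][0])
    averaged = [0.0] * dim
    for weights, n in updates:
        if len(weights) != dim:
            raise ValueError("all clients must report weights of the same length")
        for i, w in enumerate(weights):
            averaged[i] += w * n / total_samples
    return averaged


if __name__ == "__main__":
    # Two hypothetical devices: one trained on 1 sample, the other on 3.
    print(federated_average([([1.0, 2.0], 1), ([3.0, 4.0], 3)]))
```

Raw training data never leaves the devices in this scheme; only the weight vectors are shared with the coordinator.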

Implications for Social Innovators

Edge computing enables mission-driven applications that must work reliably in the field. Health initiatives use it to run diagnostic models on handheld devices in rural clinics. Education platforms deploy offline-first learning apps that update when internet access becomes available. Humanitarian agencies process drone or satellite data at the edge to generate immediate situational awareness during crises. Civil society groups use edge systems to collect and analyze local feedback before syncing with larger platforms.

By bringing computation closer to the source, edge computing allows organizations to deliver timely, resilient, and inclusive digital services in diverse and resource-constrained environments.